Empires of the mind.

Saturday, 28 October 2017

Joni Mitchell and me

A strange and wonderful thing happened the other day: I rediscovered the music of Joni Mitchell. And all this because of an article about the end of production of the Boeing 747! The article had a link to Joni Mitchell singing her song ‘Amelia’. (747 history)

It's been a long time since I first found her: about 1968, I think, and undoubtedly on John Peel's radio show, Top Gear.

At the time I was living at home and had not yet found my way. I had struggled at school and drifted into a nothing job in a local shop. Somehow or other I latched onto the folk sound of the early Joni Mitchell; I saw a great Joan Baez concert on the BBC around that time and Joni sounded similar. But I lived in an isolated rural community and, beyond buying records, I was going nowhere. Soon, though, I had the good fortune to get fired from my nothing job, and this finally kicked me into life. I joined the Air Force and started on the technical journey that has been my world ever since.

I bought several more Joni Mitchell records as soon as they were released. Joni's Clouds came out in 1969 and I joined the Air Force in September of that year. While Joni was exploring and singing about the counterculture of Southern California, I was immersing myself in the conservative world of the British military.

But this was not all bad. Despite pleading from the USA, the British government of the day refused to get involved in the Vietnam War, so there was little danger of my new military career taking me into combat. I was free to learn engineering and, in my time off, free to explore a little of the counterculture as far as it existed in the UK.

August 1970 saw me, Joni Mitchell and a totally breathtaking cast of massive stars at the Isle of Wight music festival (Isle_of_Wight_Festival_1970). I was a little conspicuous with my military haircut and, in fact, I was on my own. But my attachment to Joni Mitchell continued. I bought her third album, Ladies of the Canyon, in 1970 but then, for a while, for reasons unclear, we parted!

Our paths would not cross again until the end of the 1980s, when I met Cathy. Cathy is a musician and a Joni Mitchell fan, and she owned the later albums from the 1970s, including the magnificent The Hissing of Summer Lawns, which I came to adore.

Joni released this album in 1975 and it is stunning in its complexity. Songs like ‘The Boho Dance’ continue to intrigue me 40 years later. Somehow, even with cryptic lyrics, she still creates little movies that run in one's head. Her wonderful voice, her perfect phrasing and the deep, multilayered textures of the music make listening to Joni a remarkable experience.

So it's poetry set to music? No, it's much more than that. The cadence and colour of the music provide much more than a reading could. Joni is a master of subtle, multidimensional emphasis, which allows one to keep finding more and more in the music.

Somehow, in Joni, fate has contrived to combine beauty, a remarkable voice and a huge writing talent for both words and music. And yet I'm sure even all that is not enough to make a world-class star. Joni also has a will of iron, a will that kept her going after a childhood polio attack and kept her afloat and working in a business where so many fall to drugs and other psychological problems.

As the 1970s progressed Joni's music became more and more complex. Her fame gave her licence to experiment and she left some of her earlier fans behind. But for me Joni Mitchell now belonged to Cathy, and when we parted I let Joni Mitchell go too. Relationships come and go, but the music remains, waiting to be heard when we are ready to find it again.

This lovely concert, Joni and James Taylor (from 47 years ago today), is introduced by John Peel. Here Joni is vocally at the height of her powers, though her music and lyrics have not yet reached their full complexity. But that's OK: through her recorded works we can travel in time, follow the trajectory of Joni's remarkable talent and relive it as often as we want.

October 2017

Friday, 14 July 2017

Language as Information

I have finally, after more than eleven years in Germany, started to seriously study German. You might ask why it has taken me so long to get around to studying the language of the country I live in. I have to say I have no good answer to that. However, now that I’ve started, I’ve made a few observations on the topic, and these are what follow.

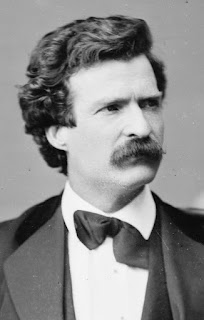

Many years ago Mark Twain wrote a brilliant, humorous essay, ‘The Awful German Language’, in which he berated the German language for the complexity of its grammar. This essay has probably been used ever since as a source of excuses by thousands of struggling students of German.

It’s sometimes said that people tend to be good either at languages or at mathematics. But what are the differences? Mathematics and physics are only considered to be properly defined when a set of explicit, repeatable rules exists. Yet language textbooks are also full of rules, the rules of grammar, and probably the biggest challenge in learning a language is learning to ‘internalise’ grammatical structure.

And structure is crucial to communication. In 1948 Claude Shannon produced a milestone study of communication theory, ‘A Mathematical Theory of Communication’. It was primarily concerned with telecommunications, formalising and creating a rule set in order to improve the quality of electronic communication, but some of Shannon’s observations have since been generalised and applied to other forms of communication, including spoken and written language.

All communication systems are subject to ‘noise’. In this sense ‘noise’ is interference with, or corruption of, the intended message. Anyone who has tried to follow a discussion on a weak radio channel is aware of the implications of noise. But over the years communications systems have improved as techniques for reducing the effect of noise have been devised.

Effective and accurate communication requires a level of redundancy in the construction of messages. Codes converting letters into numbers have been in use since the 16th century, but it was with the invention of the electrical telegraph that message accuracy fell into the province of engineers.

The accuracy of telegraph message communication was greatly improved by adding ‘parity’ bits. By imposing the rule that every character must be encoded with an even number of 1 bits, any noise error that flipped a single bit (a 0 becoming a 1, or a 1 becoming a 0) during transmission could be easily detected, because the count of 1s would then be odd. The message content was unchanged by these parity bits; they provided only a means of knowing whether the message had been received accurately. In the event of errors a repeat of the transmission could be requested.
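To make the parity idea concrete, here is a minimal Python sketch of even parity. It is an illustration under assumptions, not a historical telegraph code: it uses the 7-bit ASCII code for the letter ‘A’ as a stand-in character, and the helper names `add_even_parity` and `parity_ok` are hypothetical. It also shows the scheme's limit: two flipped bits cancel each other out and slip through undetected.

```python
def add_even_parity(bits: list[int]) -> list[int]:
    """Append one parity bit so that the total count of 1s is even."""
    return bits + [sum(bits) % 2]


def parity_ok(word: list[int]) -> bool:
    """An even-parity word passes the check if its count of 1s is still even."""
    return sum(word) % 2 == 0


# Assumption: 7-bit ASCII 'A' (1000001) stands in for a telegraph character.
char_a = [int(b) for b in format(ord("A"), "07b")]
sent = add_even_parity(char_a)

one_error = sent.copy()
one_error[3] ^= 1  # noise flips a single bit in transit

two_errors = sent.copy()
two_errors[3] ^= 1
two_errors[5] ^= 1  # a second flip restores even parity

print(parity_ok(sent))        # True: received intact
print(parity_ok(one_error))   # False: request a repeat
print(parity_ok(two_errors))  # True: this error goes unnoticed
```

The check adds one bit per character and never alters the other seven, which is the sense in which parity is pure redundancy: it carries no message content of its own.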

Grammar in human communications performs a similar function. If we have two short sentences:

There is one dog.

There are two dogs.

The fact that the first sentence speaks of a single dog is apparent from the use of ‘is’, the lack of an ‘s’ on the end of ‘dog’ and the word ‘one’. In the second sentence we speak of dogs in the plural, and the ‘are’, the ‘s’ and the ‘two’ all confirm that we now refer to more than one dog.

If all communication were to take place in perfect conditions, one might argue that two constructions are not needed and the second construction could always be used. Thus, “There are one dogs.” Of course, to an adult native English speaker this introduces a level of cognitive dissonance. We immediately question what we’ve heard and usually request a re-transmission: "Excuse me?". Because communication conditions are rarely perfect, humans seem to have evolved language in such a way as to support reliable and accurate communication by emphasising the difference between singular and plural. The spoken language has redundancy.

Grammar imposes many more rules. And German grammar, as Twain so memorably recounts, is greatly preoccupied with emphasising the gender of nouns and the four cases: nominative, accusative, dative and genitive. Together, the gender of a noun and its role in the sentence contrive to modify, amongst other things, the definite article, THE.

If a sentence such as ‘The girl gives the teacher the apple’ is translated into German, we find three different forms of the definite article: Das Mädchen gibt dem Lehrer den Apfel.

Das Mädchen, the girl (gender neuter, case nominative).

Dem Lehrer, the teacher (gender masculine, case dative).

Den Apfel, the apple (gender masculine, case accusative).

The different forms of THE add redundancy to the sentence. Even without thinking about the verb too much, the proficient listener knows that das Mädchen is the subject of the sentence and den Apfel is the direct object. The rather unfortunate thing is that the whole construction is made more complicated by needing to consider the genders of nouns. And the choice of gender is, I believe, largely arbitrary.
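How the articles in that sentence carry overlapping information can be sketched as a lookup table. The Python below holds the standard German definite-article table and, for a given article, lists every gender and case it could signal; the names `ARTICLES` and `readings` are hypothetical, chosen for illustration. It also shows where the redundancy runs out: ‘das’ on its own could be nominative or accusative, so the listener still leans on the other articles and on word order.

```python
# Standard German definite articles, indexed by (gender, case).
ARTICLES = {
    ("masculine", "nominative"): "der",
    ("masculine", "accusative"): "den",
    ("masculine", "dative"): "dem",
    ("masculine", "genitive"): "des",
    ("feminine", "nominative"): "die",
    ("feminine", "accusative"): "die",
    ("feminine", "dative"): "der",
    ("feminine", "genitive"): "der",
    ("neuter", "nominative"): "das",
    ("neuter", "accusative"): "das",
    ("neuter", "dative"): "dem",
    ("neuter", "genitive"): "des",
    ("plural", "nominative"): "die",
    ("plural", "accusative"): "die",
    ("plural", "dative"): "den",
    ("plural", "genitive"): "der",
}


def readings(article: str) -> list[tuple[str, str]]:
    """Return every (gender, case) pair that this article form could mark."""
    return [key for key, form in ARTICLES.items() if form == article.lower()]


# The three articles from 'Das Mädchen gibt dem Lehrer den Apfel'.
for article in ("Das", "dem", "den"):
    print(article, readings(article))
```

Knowing that Lehrer and Apfel are masculine singular narrows ‘dem’ to dative and ‘den’ to accusative, which leaves nominative as the natural reading for ‘das Mädchen’.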

Native speakers have learned in early childhood how to correctly apply all the rules of whatever language they are born to. They soak up all the genders and internalise the context so that the correct form of the definite article is used without conscious thought, without any formal expression of rules. For adult students of a foreign language it’s different. There’s vocabulary to learn, and one must learn enough about grammar and the structure of sentences to be able to parse and deconstruct the sentences one hears in ‘real time’.

Through copying, it becomes possible to speak original sentences of our own. German is easier to pronounce than English: the sounds the various letters make are consistent. Learning pronunciation can be difficult, as the larynx frequently needs to learn how to produce different sounds than it is accustomed to, but once that’s done you are on your way.

But why are some people better at mathematics and some at languages? I think this relates to how these different skills must be learned.

Algebra, for example, is also concerned with the manipulation of symbols according to a set of rules. The difference is that the ruleset is much smaller than any spoken-language ruleset and is totally consistent. The mathematics student learns the structure and the rules and then applies them. It takes practice and rigorous adherence to process. But there’s no need for the algebra student to follow through the workings of the solutions to various problems; he can solve successively more complex equations, always using the same rules. And the answer, once it's been found, doesn't change. Algebra requires a simple ruleset that can be applied repeatedly to numerous different problems.

Achieving proficiency in language is different. One may, for example, hear a sentence and know only 75% of the words with certainty and many words do not have a one-to-one equivalent in your native tongue. If you hear the same sentence again you may figure out more as you recognise a long compound word or a noun derived from a verb that you know. Hear the sentence yet again and you may fill in still more of the blanks. In fact you may settle on the meaning of a sentence and hear it again a month later and have a new insight into its subtlety, perhaps now you recognise an undercurrent of irony that you missed before. Through these iterations the sentence hasn’t changed but you have!

By now you may even feel confident enough to express an opinion on the matter. If you are lucky you’ll have enough words in your vocabulary to express what you want to say. In some ways speaking for yourself is a little easier: you are able to work with the words you know, which is not the case when listening.

So, if you are the type of person who only feels comfortable with a limited, highly specific ruleset that you are sure you have completely internalised, then mathematics is the topic you will learn more easily. If, on the other hand, you are prepared to ‘have a go’ on the strength of incomplete data, and to commit to a solution on that basis, languages will suit you better.

There is a difference between what is known and what can be expressed. You may know how to ride a bicycle, but try using English or any other language to tell someone else how to do it. But could it be that some spoken languages are just better for communication than others?

Such matters might be analysed mathematically by evaluating how much of a spoken language is given over to redundancy and how much to carrying the primary information, the payload. As the communication environment changes, the need for high levels of redundancy may change too. Perhaps less redundancy is needed. Then certain language characteristics may become just an unnecessary burden, like the human appendix, an organ that exists but no longer serves any useful function.
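One way to start on that analysis is Shannon’s own definition of redundancy: one minus the ratio of a source’s actual entropy to the maximum entropy it could have with the same symbols. The Python sketch below, built around a hypothetical helper `letter_redundancy`, applies that definition to single-letter frequencies only and assumes a plain 26-letter alphabet, so German umlauts and ß are simply skipped. Because it ignores context (spelling patterns, grammar, agreement of the kind discussed above) it captures only part of a language’s redundancy, and a short sample gives only a rough estimate.

```python
import math
import string
from collections import Counter


def letter_redundancy(text: str) -> float:
    """Estimate redundancy as 1 - H / H_max from single-letter frequencies.

    Only the 26 unaccented letters a-z are counted; everything else is ignored.
    """
    letters = [c for c in text.lower() if c in string.ascii_lowercase]
    if not letters:
        raise ValueError("text contains no letters a-z")
    n = len(letters)
    counts = Counter(letters)
    # Shannon entropy of the observed letter distribution, in bits per letter.
    h = -sum((k / n) * math.log2(k / n) for k in counts.values())
    # Maximum possible entropy: all 26 letters equally likely.
    h_max = math.log2(len(string.ascii_lowercase))
    return 1 - h / h_max


sample = "There is one dog. There are two dogs."
print(f"{letter_redundancy(sample):.2f}")
```

A text in which every letter is equally likely scores zero, and a text made of a single repeated letter scores one; real languages fall in between.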

Perhaps the gender of nouns, which appears to carry zero information while imposing complexity to no purpose, does nothing more than lend a ‘clunkiness’ to the language that actually stands in the way of effective communication.

While not wishing to supply nationalistic chauvinism with any more ammunition, could it be that some human languages are just better than others? In literature and in popular music the English language dominates. Is this merely down to widespread usage, or might it be attributed to the inherent efficiency of English as a means of expression?

For example, there's a famous English sentence that summarises the dilemma of human existence in ten simple words. Shakespeare asked what we are to make of human evolution's greatest achievement: consciousness.

He poses the question: is this remarkable attribute, consciousness, with the pain that self-knowledge brings, worth the price? Or, as the man himself put it, “To be or not to be. That is the question.”

For this post I've shamelessly plagiarised these wonderful books. https://en.wikipedia.org/wiki/Grammatical_Man

Saturday, 14 January 2017

Memes and Stories

How simple, emotionally driven stories have replaced considered political discussion.

It seems that many people now get ALL their news from social media sources such as Facebook (facebook-twitter-really-people-get-news). The following, then, is rather disturbing.

Shortly after the 2016 US Presidential election it became clear that websites hosted in Macedonia had received huge amounts of traffic from Facebook. Supporters of Donald Trump had followed Facebook links to fake stories (FT, Fake News). The authors of these sites used multiple identities to ‘share’ numerous links to their sites.

The people creating these sites, usually using material copied from elsewhere but with more outrageous headlines attached, took no ideological side in the political debate. They, like numerous newspaper proprietors before them, were just maximising revenue. Google AdSense counts the clicks these sites receive and the operators make an income which is good by Macedonian standards. The popularity of these ‘Fakebook’ sites has created considerable alarm in Germany, where there are fears that the 2017 German elections will be influenced by something similar (Facebook fact checking).

In 1976 Richard Dawkins coined the term meme. Memes are a cultural analogue of genes: chunks of information which can replicate within a suitable environment. And memes, like genes, are meaningless and helpless on their own. Memes need to be associated with some kind of story in order to gain traction. They need a ‘hosting ideology’, or narrative, to engage with, which they then support (Memes).

In the same way that genes whose mutations enhance the survival of their physical hosts tend to spread, an effective meme will enable its ‘hosting’ ideology to develop and reproduce. Memes that replicate most effectively enjoy more success, and they enable the reproduction of their ‘hosting ideology’. In Dawkins' telling the world's religions are the ‘hosting ideologies’ of memes. But there can be many more.

The brain tries to find patterns in chaos. The British film-maker Adam Curtis has suggested that our interpretations of the news derive from a need to create narratives to explain the chaos of daily events (HyperNormalisation). This is a tendency to see the news as a story unfolding towards a goal. Journalists themselves speak of their work as telling or breaking stories, and such stories need good guys and bad guys.

History is related in terms of such stories. A common narrative for the Second World War describes a battle between good and evil. Everything the Germans did was evil and everything the Allies did was proper and in support of the final justified victory. The Holocaust was an act of evil consistent with this narrative. But the Allies' indiscriminate bombing of civilian populations is not. Therefore it tends to be dismissed, at least in most English-language tellings of the story.

Such historical narratives may also be written to satisfy the needs of later times. Churchill’s History of the Second World War, which largely ignores the part of the Soviet Union in defeating Hitler, supported Churchill’s almost simultaneous efforts to warn of the threat of communism (Iron Curtain Speech).

The desire to see events in terms of simple narratives is exploited by the politically ambitious. The British EU referendum was portrayed as a struggle between a bunch of plucky, everyman campaigners and an elite who had surrendered Britain’s democratic process to the self-serving bureaucrats of Brussels. The slogan ‘Take Back Control’ became a meme within this narrative. The story portrays the people who supported Brexit as David struggling to defeat the Goliath of Brussels bureaucracy.

Another meme spoke of the evils of multiculturalism. In the Brexit telling, multiculturalism was part of the EU conspiracy: a folly contrived by a left-leaning elite who imagined that all races are capable of living together. This ignored the fact that the multicultural history of Britain is actually founded on imperialism. Immigrants from the former colonies of the British Empire had been invited to Britain as a cheap labour force since well before the European Union was conceived. A similar meme has been co-opted by Trump for his campaign. It ignores the fact that the forefathers of America's black population were forcibly transported there.

Climate Change has been incorporated into another good guys versus bad guys story. Donald Trump has promoted the tale that Climate Change is a myth masterminded by the Chinese in order to make US industry uncompetitive. Others, such as the British journalist James Delingpole, have portrayed Climate Change as a conspiracy of the scientists themselves who are deceiving the public in order to serve their own sinister and miserable ends.

Donald Trump spent the Presidential campaign looking for positive feedback. Picking up on a few contrarian positions, he has done little beyond chasing public approval. His actual policies are hardly discussed. The Trump story is the plucky outsider taking on the establishment: the David and Goliath story. And so Trump managed to persuade people who normally don't vote to go out and vote for him.

The creators of those Macedonian websites were simply adding material to an ongoing narrative, creating confirmation bias for those backing Trump. The Trump story is simple. It has no need or room for facts. Such stories allow people to engage with issues on an emotional level, so that all the difficult historical and technical details can be ignored.

Every week a new chapter emerges. For example, Trump is offended by a Hollywood actor and insults her right back. Then, the more the left is outraged, the more courageous Trump, as David, appears in taking on the Goliath of the establishment.

Once the audience is engaged emotionally it really doesn’t matter how implausible the plot is. As long as you can keep the conflict going, the audience will continue to follow and continue to cheer for the good guys, no matter how improbable those good guys may be.

Here's an excellent article from Laurie Penny on the subject.

fake-news-sells-because-people-want-it-be-true

Wednesday, 14 December 2016

Why Nationalism?

This year thousands of words have been written trying to rationalise the rise in popularity of such figures as Donald Trump. Why did a billionaire gain so much popularity among working class people?

"He tells it like it is."

Let's take a look at Britain and a famous part of television history which I think is overdue for a comeback: the TV character Alf Garnett. He is a shorthand for a group that the Scots call ‘Tenement Tories’ and that the English and Americans, strangely, don't have a name for.

As a working class conservative, Alf Garnett is proud of the Flag, the Queen and the British Empire. With his collection of angry prejudices, Alf was hugely popular on the TV screens of the 1960s and 1970s. He proved to be an archetype, with counterparts eventually appearing on American and German television. But why? We expect the Conservatives to get their support from the moneyed class, yet Conservative support has long been found within the working class. Why do some working class voters, like Alf Garnett, vote for the party which is most likely to exploit them?

Alf is proud of his Britishness. National identity and conservatism are a natural fit, and nationalism fits right in with Trump and his "Make America Great Again." But why is national identity so important to Alf and his counterparts around the world?

Social identity is crucial to self-esteem. At the height of our working lives we are members of many actual, functional social groups. We can be professionals (doctors, engineers and so on) and parents. We may still be children ourselves, with living parents, as well as belonging to an ethnic group or nation.

As we grow older this changes. We retire and lose our professional identities, our children become independent, our own parents die. Our range of social identities shrinks. At the end we may be left with just one significant group to indisputably call our own - our national identity.

People lower down the social ladder never had many social identities in any event. They were probably not members of professional groups, and perhaps their roles as parents or children were not that binding. They could cite little more than their national identity: being white and British.

If we happen to be in such a small and shrinking social group, then the possibility that it might be diluted is painful. And these same people, who rail against migrant ethnic groups who refuse to assimilate, cannot accept that change is inevitable. But change, to our physical or social environment, is inevitable, and we deal with change by evolving. Evolution is good for a species, eventually, but it tends to be painful while it's happening. So there is much political capital to be made by offering an alternative to change.

But today's politics, exploiting the anger of white working and middle class people, will eventually dry up. There's a demographic bulge, all those retired baby boomers, whose fears are ripe to be exploited. Would Alf Garnett have voted for Brexit or Trump? You can bet your life he would. And there are a lot more like him right now. There's a window of opportunity open but, I think, it won't stay open for ever.

Here's another take on the topic.

Saturday, 19 November 2016

Brexit and Trump

Are there similarities between the UK's Brexit and the mindset that gave the USA Trump?

Of course there are. Both the Brexit campaign and the Trump campaign found new voters among those previously disenfranchised. Many of these people had not bothered to vote before because all the candidates seemed to amount to the same thing. These people discovered a new sort of politics they could understand, something they felt was worth going out and voting for.

Both campaigns promoted the idea that the established political parties were in thrall to an elite, a combination of established media and authority who had conspired to leave ordinary people baffled, poor and helpless. But now there was a new, straightforward option available.

The appeal of one button to fix all problems is huge, and everyone wants to believe that there are answers to long-standing problems. But in classic storytelling, changes to the old order must produce losers as well as winners. For the new narratives of Trump and Brexit to appear plausible, the campaigns had to explain who the new losers would be. And these losers would be outsiders.

For Brexit the losers will be the EU, those faceless bureaucrats who have, it is claimed, taken over so much control of life in Britain. Post-Brexit Britain will withdraw its funding to the EU, so the new losers will not be the native British. There will also be an end to all those migrants who come to Britain to steal jobs, live on benefits and bring in new, non-British values and customs. There will be justice! And now someone else will suffer.

The Trump campaign, with no EU to focus on, took a slightly different tack. In the USA there are also outsiders who will be punished: migrants, and foreign countries who will have to pay. Those countries, such as the European nations, are the ones who, it was claimed, have long had a free ride at the expense of the USA (NATO being largely funded by the USA). And the Trump campaign dug further into the various conspiracy theories that the conservative press has promoted. Climate change is, according to Trump, a concept promoted by the Chinese in order to make American industry uncompetitive.

Both campaigns, while claiming to reject a media that has long conspired with the mainstream political elite, actually depended on support from new media. Raheem Kassam, who is part of Nigel Farage's UKIP team, is now head of Breitbart London. Stephen Bannon, who ran Trump's campaign, was formerly the Executive Chairman of Breitbart News. Breitbart News (USA). Bannon is now being described as chief strategist for Trump's new presidential administration. Breitbart is also planning to expand into France and Germany to exploit the nationalist waves there. Monetizing Nationalism.

In the UK large sections of the traditional print media, such as the Sun and the Daily Mail, took up the cause of Brexit. These outlets often used ideas and slogans drawn from the new media. And at the top of the food chain, the Daily Telegraph provided a less febrile rationalisation for Brexit.

These new media reports are created by professionals. But a few minutes looking at internet forums, even Facebook posts, reveal that the kind of rhetoric that gets hashed up in the likes of Breitbart and the tabloids has entered common use.

www.pprune.org is a mainly aviation website. It carries a section called Jet Blast which has become largely an echo chamber for the right wing media. Internet forums such as this constitute a kind of community, mainly populated by retired people, where like-minded souls congregate and rehearse their arguments and rationalisations.

The new media has provided a rhetoric which serves in place of actual discussion. For example, 'Project Fear', is the label used, and still rolled out, whenever a potential downside to Brexit is described. Such labels are not arguments but conversation stoppers that prevent the need to actually consider the point being made. On forums like pprune anyone can participate in a form of debate which consists mainly of copy/pasting slogans and clips from online media.

The expression that these new media provide has brought marginal and improbable arguments into the mainstream. Responsible media must find time, in the interests of balance, to cover these views. Such coverage then gives a kind of authority to contrarian opinions on such solid, proven issues as climate change. Lay people can be left with the view that even hard science is worth no more than personal preference.

This, somehow, has mobilised the disenfranchised who voted for Brexit and Trump.

But after all the campaigning, what happens next? What happens when their supporters discover that Trump and Brexit cannot deliver? Do they shrug and let the old order re-establish itself?

Somehow I think not. There is a modern era precedent for the populism of Farage and Trump. This comes in the form of Silvio Berlusconi, another man who made grand promises and failed to deliver. He presented himself as a non-establishment figure who would challenge the political elites.

Every time Berlusconi failed to deliver on his promises he made new ones and the public forgave him. The fact that he controls 90% of the Italian media doubtless helped.

And so it will be with the promises of Brexit and Trump. When Brexit fails to improve the lot of those who came out to vote for it, it'll be the EU's fault, or perhaps the fault of a government that lost its nerve. When Trump fails to build his wall or lock up Hillary Clinton, there'll be new excuses and new promises to distract and enthuse his supporters.

And why not? Berlusconi managed to keep it going for nine years. Will the British and the Americans be less gullible than the Italians? We shall see.

This article from Luigi Zingales describes the Berlusconi/Trump phenomenon and how it might be dealt with. the-right-way-to-resist-trump

Friday, 30 September 2016

Tobacco, petroleum and sugar, so what do they have in common?

All three are industrial concerns that over the last hundred years have enjoyed huge international markets. And all three pose a significant hazard to human health.

The tobacco industry peaked in the late 20th century, although the hazards of smoking had been known since the 1930s. Eventually, of course, the health hazards of smoking became indisputable. The battle against petroleum is still being fought, with most of us stuck as ‘users’ of a product that causes environmental damage through excessive CO2 in the atmosphere (causing global warming and ocean acidification). Then there are the direct health hazards of petroleum exhaust, especially diesel exhaust, which is carcinogenic.

It’s not been enough to simply inform people of the hazards. The tobacco and petroleum industries have fought back with disinformation campaigns that have sought to throw doubt on the science identifying these hazards. In fact, a major secondary industry has been created to generate doubt about the actual hazards of smoking and the reality of man-made climate change. In my earlier blog I looked into the techniques being used by the tobacco and petroleum industries to protect their market share. engineering-consent

There’s another industry which is determined to maintain market share despite clear health hazards associated with its consumption. That is the sugar industry.

Over the last 50 years obesity has become a major health hazard. The obesity epidemic started in the USA and has spread across the world as different societies have adopted American dietary habits. Now, even third world countries that are barely producing enough food to support their populations have obesity problems.

Much of the trouble started in the 1970s when nutritionists were persuaded that foods containing fat were bad. In response, the food ‘manufacturing’ industry started to produce low fat products.

However, these new ‘manufactured’ food products needed to be made palatable because low fat foods are not tasty. Low fat foods generally have sugar added to them to restore some taste. Additionally, manufactured foods usually have the ‘fibre’ removed. This is to produce foods with a longer shelf life. The combination of added sugar and low fibre is catastrophic.

*

In order to understand the problem with sugar and refined carbohydrates we need to look at how the body digests food. The digestive process turns food into sugar which is carried around our body by the blood stream. The blood stream is the distribution system for the energy that we take on board as food. Between meals our blood sugar level drops slowly as the normal process of maintaining the muscles and other organs is carried out.

The body has a mechanism to regulate the blood sugar level. When the blood sugar level falls sufficiently we feel hungry and go looking for food.

It is now known that many of the problems of obesity come from so-called refined carbohydrates. These are the easily digested sugars and starches. In refined carbohydrates the natural fibres, and with them much of the nutrient value, have been removed. Because the fibre has been removed, these foods are digested very quickly.

When refined carbohydrates are consumed our blood-sugar increases abruptly. Some of the sugar in the blood is immediately used by the muscles and other organs but the pancreas responds to the abrupt increase in blood-sugar by producing large amounts of insulin. The increased insulin converts some of the blood-sugar into new fat. This is the natural process. But because the blood sugar level increased so abruptly rather more insulin is produced than is required to merely restore the blood sugar level to normal. The blood sugar falls below normal.

The ‘control system’ for the blood-sugar level has overreacted. The new, low blood sugar level causes loss of energy and renewed feelings of hunger. This is very undesirable! The body has just converted some of our meal into fat and yet we are still hungry! So we crave more food and if we try and satisfy this with more refined carbohydrates the ensuing spike in blood sugar level will cause a further burst of insulin from the pancreas. More fat will be stored and the blood-sugar level will fall again and another hunger, low energy cycle will be induced.

And so it goes on. The cycles of low blood sugar keep causing feelings of hunger and lack of energy. The final result being we take on more calories than we need.

*

All this was understood many years ago. In the 1970s a British scientist called John Yudkin was one of the first critical voices speaking out against the dangers of sugar. He wrote a book called Pure, White and Deadly.

It was around this time that the sugar and manufactured foods industry started to promote the position that it was not sugar but fat that was the primary cause of heart problems. In 1967 three Harvard scientists were sponsored by something called the Sugar Research Foundation (a trade group for the North American sugar industry) to publish a review of research on sugar, fat and heart disease. This report played down the effects of sugar and emphasised the role of fat in heart disease. In 1970 the Seven Countries Study was published. This was also a highly questionable report aimed at deflecting the blame for obesity away from sugar.

These and other sponsored studies ignored the science of how sugar is metabolised and turned into fat. They were all highly critical of foods containing fat.

Today standard governmental health advice is still to reduce caloric intake by eating less and to increase caloric expenditure by exercising more. This ignores the role of refined carbohydrates, which stimulate the production of extra insulin and, as I've described above, cause the production of fat even under conditions of regular exercise and reduced food consumption. (It should be said that regular exercise is valuable, as it does reduce stress and this reduces the desire to overeat.)

Yudkin’s study from 1970, and more recent, firmly based scientific accounts, have still not been fully endorsed by the great public health institutions. Britain’s NHS and the public health advisors in the USA and other countries are influenced by the powerful food industry, which uses sugar and related products such as corn syrup in huge quantities. Thus, as we have seen with cigarettes and hydrocarbon fuels, solid scientific arguments highlighting the danger to public health are being challenged by powerful vested interests, using much the same techniques.

See http://www.uctv.tv/skinny-on-obesity/, from the University of California, for a very comprehensive analysis of the causes of obesity in general and of the role of sugar in diet in particular.

Tuesday, 23 August 2016

Hover Slam

One of the most fascinating developments in space technology is the new art of controlled recovery of first stage launchers. A new technique, developed by SpaceX, promises to reduce the cost of access to space considerably. It's got the Big Aerospace competition worried and it's also put the USA back on top when it comes to being demonstrably masters of cutting edge technology.

The spaceflight pioneers from the 1940s onwards had no use for recoverable launchers. Manned spaceflight technology was derived from nuclear missile technology, and there was never any question of reusing a launcher in the case of a nuclear missile.

When the Space Shuttle was developed, the two solid fuel boosters were recovered by parachute and had to be fished out of the sea and then subjected to an expensive refurbishment before the parts could be reused.

Yet machines that land vertically have been around for quite a while. Hanna Reitsch, the German test pilot, demonstrated the FW61 helicopter at a 1938 Berlin motor show, and the ability to hover was also a feature of the first operational jet with vertical landing capability, the Harrier.

The Lunar Lander could also hover and this hovering business is quite a trick. It requires a propulsion system with a precise and responsive throttle control. By this I mean that the thrust can be adjusted in very fine increments and will change quickly in response to pilot commands.

The above video, BTW, shows Neil Armstrong getting into trouble flying something called the Lunar Landing Test Vehicle. This was a rig on which Apollo crews practised their moon landings. He runs into difficulties and ends up demonstrating why he was picked to be the first man on the moon.

To hover a machine such as the Lunar Lander or a Harrier with precision, it must be possible to reduce the amount of thrust as the fuel is consumed. The thrust being produced must equal the weight of the machine plus its remaining fuel, no more and no less. And on the Harrier and the Lunar Lander the fuel was used up pretty quickly, hence the throttle setting has to be continually reduced for as long as the hover is maintained.
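To put some numbers on the hover condition: thrust must equal weight, that is T = (m_dry + m_fuel) x g, so the throttle fraction needed (T divided by maximum thrust) falls steadily as the fuel burns off. Here is a minimal Python sketch; the function name and the vehicle figures (5 t dry mass, 3 t of fuel, 100 kN maximum thrust) are purely hypothetical and don't describe the Harrier, the Lunar Module or any real machine.

```python
G = 9.81  # standard gravity, m/s^2

def hover_throttle(dry_mass_kg, fuel_mass_kg, max_thrust_n):
    """Fraction of maximum thrust at which thrust exactly equals weight."""
    weight_n = (dry_mass_kg + fuel_mass_kg) * G
    return weight_n / max_thrust_n

# Hypothetical vehicle: 5,000 kg dry, up to 3,000 kg of fuel, 100 kN maximum thrust.
for fuel_kg in (3000, 2000, 1000, 0):
    setting = hover_throttle(5000, fuel_kg, 100_000)
    print(f"fuel left {fuel_kg:>5} kg -> hover throttle {setting:.0%}")
```

In this toy case the required setting drifts from roughly 78% down to 49% as the tanks empty, which is why the throttle has to keep being eased back for as long as the hover lasts.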

Rocket engine requirements tend to emphasise power over controllability. With spacecraft launchers the main issue is thrust: the engines are started up for lift-off and tend to run flat out until all the fuel is exhausted. And it turns out that the engines designed by SpaceX for the Falcon 9 launcher cannot be throttled successfully to less than 50% of maximum thrust.

It's a characteristic of combustion engines that they have a relatively constrained range that they can operate reliably in. Reduce the fuel flow too much, by reducing the throttle setting, and they stand a good chance of quitting completely, or surging (producing too much thrust in pulses). In order to produce consistent thrust a certain minimum power setting has to be maintained.

In what one might consider a characteristic SpaceX approach, they have come up with a software solution to a hardware limitation.

With helicopters, the Lunar Lander and Harriers, the landing technique is to first establish a stable hover and then reduce the power gradually to touch down smoothly. But for SpaceX, the inability to balance weight with thrust means that hovering is simply impossible. By the time the recovering first stage has got to the touchdown point, after its descent, it is so light that even 50% thrust from one of its nine engines would cause it to ascend.
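The reasoning about why hovering is impossible can be sketched as a simple check: a hover needs some throttle setting at which thrust equals weight, so the weight must lie between the engine's minimum stable thrust and its maximum. The 50% floor comes from the text above; the engine thrust of 800 kN and the 25 t mass of a nearly empty stage are rough, assumed figures for illustration only, and the function names are hypothetical.

```python
G = 9.81  # standard gravity, m/s^2

def min_thrust_to_weight(mass_kg, engine_thrust_n, min_throttle):
    """Thrust-to-weight ratio of one engine running at its lowest stable setting."""
    return engine_thrust_n * min_throttle / (mass_kg * G)

def can_hover(mass_kg, engine_thrust_n, min_throttle, max_throttle=1.0):
    """True if some throttle setting in the stable range makes thrust equal weight."""
    weight_n = mass_kg * G
    return engine_thrust_n * min_throttle <= weight_n <= engine_thrust_n * max_throttle

# Assumed figures: nearly empty first stage of ~25 t, ~800 kN per engine, 50% floor.
mass_kg, thrust_n, floor = 25_000, 800_000, 0.5
print(f"T/W at minimum throttle: {min_thrust_to_weight(mass_kg, thrust_n, floor):.2f}")
print("hover possible:", can_hover(mass_kg, thrust_n, floor))
```

With a thrust-to-weight ratio of about 1.6 even at the lowest setting, the stage in this sketch accelerates upwards whenever the engine is running, so there is no steady hover to settle into.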

In order to achieve the neat trick we see in the video at the top SpaceX have done something pretty audacious which no human pilot has ever been asked to achieve, this is the Hover Slam manoeuvre.

After the second stage and its payload have separated, the first stage must be turned around and slowed down, because at this point it is travelling at several times the speed of sound, although still well short of orbital speed. Some of its engines are restarted and, after slowing down, the first stage starts its descent. It's still going faster than sound as it re-enters the atmosphere, and heat shielding is necessary to protect the main engines, which are now at the front in the direction of travel.

As it re-enters the atmosphere, steerable grid vanes, which can be seen in the video at the top of the page, provide some aerodynamic control. Grid vanes are used on guided 'smart' bombs and are robust and effective. At this point the launcher is basically falling out of the sky, but the grid vanes allow the tubular fuselage of the launcher to be positioned so that a little aerodynamic lift is produced. This allows the thing to track horizontally towards the landing point.

As the last few hundred feet of altitude are lost, the grid vanes position the launcher vertically. One of the engines is restarted, the descent slows, and vertical velocity becomes zero just as the thing reaches the deck height of the recovery barge.

Of course, everything must be just right. Start the engine too early and the launcher would reach zero rate of descent too soon, while it was still above the landing deck. It would not touch down, and it would continue to ascend until all the fuel was used up. Start the engine too late and it would be coming down too quickly at deck level and would smash into the landing platform. In either case it ends in what Elon Musk likes to call a RUD, a Rapid Unscheduled Disassembly.
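The timing problem can be illustrated with elementary kinematics. If the engine gives a constant net deceleration a = T/m - g, a stage descending at speed v needs a stopping distance of v^2/(2a), so that is the height at which to light the engine. This Python sketch assumes vertical flight, no drag and constant mass during the burn (in reality the stage gets lighter as it burns fuel, so the deceleration grows); the function names and the figures used (150 m/s descent, 800 kN thrust, 30 t stage) are illustrative assumptions, not SpaceX data or SpaceX's actual guidance method.

```python
import math

G = 9.81  # standard gravity, m/s^2

def ignition_height(descent_speed, thrust_n, mass_kg):
    """Height above the deck at which lighting the engine brings the speed to zero at the deck.

    Simplifications: vertical flight, no drag, constant thrust and mass.
    """
    decel = thrust_n / mass_kg - G  # net upward deceleration, m/s^2
    if decel <= 0:
        raise ValueError("thrust cannot overcome gravity")
    return descent_speed ** 2 / (2 * decel)

def touchdown_speed(descent_speed, thrust_n, mass_kg, light_at_m):
    """Downward speed at deck level, or None if the stage stops short above the deck."""
    decel = thrust_n / mass_kg - G
    v_squared = descent_speed ** 2 - 2 * decel * light_at_m
    if v_squared < -1e-6:
        return None  # lit too early: zero descent rate reached above the deck
    return math.sqrt(max(v_squared, 0.0))  # clamp tiny rounding errors at the ideal height

v, thrust, mass = 150.0, 800_000, 30_000  # assumed descent speed, thrust and mass
ideal = ignition_height(v, thrust, mass)
for light_at in (ideal + 50, ideal, ideal - 50):
    speed = touchdown_speed(v, thrust, mass, light_at)
    result = "stops above the deck" if speed is None else f"hits the deck at {speed:.1f} m/s"
    print(f"engine lit at {light_at:6.1f} m: {result}")
```

Lighting the engine just 50 m late in this toy case leaves the stage hitting the deck at around 41 m/s, while lighting it 50 m early leaves it stopping short and, unable to hover, climbing away again.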

In fact, as the video below shows, SpaceX are getting pretty good at getting things just right!

Look closely at the video and at the end of the sequence you'll see the launcher pop up, the engine shut off and the launcher drop back down to the pad.

BTW, saving all the deceleration to the very last instant before touchdown, as in Hover Slam, also happens to be the most fuel-efficient way of landing.
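Why late braking saves fuel comes down to what are usually called gravity losses: for every second the engine burns during the descent, part of its effort simply cancels the pull of gravity. With a constant thrust acceleration T/m, the burn lasts t = v/(T/m - g) and the engine has to supply a velocity change of (T/m) x t = v + g x t, so the shorter the burn, the less is wasted. Here is a Python sketch under the same simplifying assumptions (vertical flight, no drag, constant T/m); the function name and numbers are hypothetical.

```python
G = 9.81  # standard gravity, m/s^2

def braking_delta_v(descent_speed, thrust_accel):
    """Return (engine delta-v, gravity loss) for stopping a vertical descent.

    thrust_accel is T/m, assumed constant; the net deceleration is T/m - g.
    """
    net_decel = thrust_accel - G
    if net_decel <= 0:
        raise ValueError("thrust acceleration must exceed g")
    burn_time = descent_speed / net_decel
    return thrust_accel * burn_time, G * burn_time

# Same 150 m/s descent, stopped hard (T/m = 26 m/s^2) or gently (T/m = 12 m/s^2).
for accel in (26.0, 12.0):
    dv, loss = braking_delta_v(150.0, accel)
    print(f"T/m = {accel:4.1f} m/s^2: delta-v {dv:6.1f} m/s, of which gravity loss {loss:6.1f} m/s")
```

In this toy comparison the gentle stop costs more than three times the delta-v of the hard one, and any time spent hovering adds a further g, about 9.8 m/s of delta-v, for every second.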

Technical details and more background on Hover Slam can be found on these links.
